Discussions have been swirling at the state and district levels about school data and accountability over the last few months.

Accountability, for those who may not know, is a term for measuring how well a school is doing and deciding what should be done about, or celebrated in, those results.

These discussions cut to the core of whether Colorado has a standards-based education system and whether the state will ensure that schools are accountable for supporting students to reach these standards. We know from decades of research that standards matter: setting expectations makes a huge difference for student learning.

Does Colorado have a standard for what students should know and be able to do in reading, writing and math? And will the state of Colorado hold school districts accountable for these standards?

Will the Colorado accountability system go beyond labeling schools that are in the lowest 5-8% of academic performance? Should the accountability system identify schools that are making the biggest difference for students?

What’s good enough for our kids?

Colorado State Board of Education Decisions

The specific discussion at the Colorado state board meeting this month revolves around the distribution of the ratings schools received. In particular, the state board has been grappling with how to understand and communicate whether schools are where they need to be when fewer than half of all Colorado students met or exceeded expectations on the state assessment of English Language Arts standards.

The state has considered multiple levers for communicating its expectations around how schools support student learning.

- Overall cut scores: These set a baseline for how many schools are meeting the state’s expectations for “Performance” schools (the state’s current highest rating for schools, which 72% of schools received in 2019). The state is grappling with whether this is the right distribution, and whether to add a “Distinction” category to signal the highest-performing schools in the state. See A+ Colorado’s memo to CDE on this decision.

- Individual measures including “on-track growth”: The state has developed a new way to understand “on-track growth”: whether students are making enough growth to likely move up performance levels to meet grade-level standards. The state has decided that schools are driving sufficient “on-track growth” if students are on track to move up one performance level every two years. The state has yet to release how many students will need to be on track for schools to meet the expectations (i.e., the indicator-level cut score). While we think this decision is better than the original proposal of setting “on-track growth” as whether students are on track to move up one performance level every three years, we also think that it is highly problematic to combine students who are and are not yet on grade level in a single measure.

- Set versus movable cut scores for achievement: The state has set its accountability cut scores based on a distribution. For example, schools that perform better than half of the other schools across the state meet expectations for that measure of academic achievement. In the past, this decision has effectively set the expectation that schools work toward supporting their students. However, the state recently decided to reset the SAT cut scores for this year’s SPF.

On average, students scored worse on the SAT this year than previous cohorts did. Instead of holding schools to the bar of supporting students to reach the same levels as their peers from the last two years, the Colorado Department of Education (CDE) decided to adjust the 2019 SAT benchmark scores to a lower level. It is unusual for scores to move this much, and it is unclear why this happened, but rather than asking questions of schools and districts, the state lowered the expectation. This raises big questions about whether any cut scores for schools are meaningful, and whether they signal criterion-based expectations or whether we are simply concerned with schools doing better than other schools across the state. See A+ Colorado’s comments to the State Board on this decision.
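To make the difference between a held bar and a moved bar concrete, here is a rough sketch in Python. The school scores are invented purely for illustration and are not real Colorado data, and the rule used (a school meets expectations if it scores at or above the median school) is our simplifying assumption, not CDE's actual calculation.

```python
from statistics import median

def share_meeting(scores, cut):
    """Fraction of schools whose mean score is at or above the cut."""
    return sum(s >= cut for s in scores) / len(scores)

# Hypothetical school-level mean scores, invented for illustration only.
last_year = [980, 1000, 1020, 1040, 1060]
this_year = [s - 20 for s in last_year]  # every school drops 20 points

fixed_cut = median(last_year)  # bar held at last year's level
moved_cut = median(this_year)  # bar reset to this year's distribution

fixed_share = share_meeting(this_year, fixed_cut)
moved_share = share_meeting(this_year, moved_cut)

print(f"Held bar ({fixed_cut}): {fixed_share:.0%} of schools meet expectations")
print(f"Moved bar ({moved_cut}): {moved_share:.0%} of schools meet expectations")
```

In this made-up example, every school declines, yet resetting the bar to the new distribution leaves the share of schools meeting expectations unchanged. Holding the bar makes the decline visible.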

Like we do for students, we should set high expectations for our schools. These should be meaningful and relevant. Schools that struggle with reaching this standard for most students should be provided additional support and interventions to ensure that students are reaching the standard. Read my recent statement at the Colorado State Board of Education here.

What is the bar for Denver Public Schools? Will Denver schools be expected to have students read at grade level?

Denver Public Schools recently launched the Quality Schools Task Force, composed of parents, school leaders, teachers and community leaders, to help the district determine what should happen to the Denver School Performance Framework (SPF). The task force has had two meetings so far. While the state accountability debates have mostly been about more technical uses of data with the state ratings, the DPS SPF Committee has been learning about the background of the Denver SPF while also raising questions about the use and weighting of standardized test data for accountability purposes. See September committee public feedback form.

You can find a comprehensive background and history on the Denver SPF in our latest report here.

On the Denver School Board front, we were pleased to see all members of the Denver School Board question senior staff on what the early literacy scores for grades K-2 can tell us about students’ literacy knowledge.

DPS modified their SPF to include early literacy assessments three years ago with the hope that they could better determine which schools were more on track to get students reading at grade level.

Many of the board's questions this month centered on what they can derive from these scores and where the district is making progress on supporting more students to read. These early literacy assessments were designed to give an early warning of whether students are on track to meet the state’s reading standard as measured by CMAS.

District staff have recently admitted that the early literacy scores do not correlate directly with how the district or the state of Colorado determines 3rd grade literacy (CMAS).

Adding early literacy test data was a well-intended policy change but ultimately a huge mistake, because these scores provided misinformation and eroded Denver’s effort to more accurately gauge how the district is progressing toward the goal of 80% proficiency at third grade by 2020. The decision to add early literacy data, based on interim assessments that are not designed for summative accountability purposes, incentivized schools to over-administer simplistic literacy assessments. Data from these K-2 tests inflated SPF ratings in many cases and misrepresented how effective the schools were in supporting student literacy development.

We hope that the Denver Board of Education can learn from the early literacy assessment mistakes to create a more accurate, simplified and transparent SPF. It is essential that Denver and every Colorado school district have accurate and reliable measures of school quality aligned to our state academic standards, so that schools can be better supported to improve. Without a well-designed, accurate compass, we will never get to the destination that our students deserve.

Why do Denver and other districts need a better SPF than the current one?

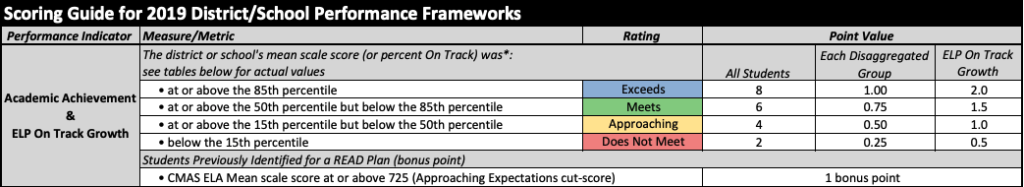

Chart of DPS vs CO framework (taken from A+ report)

Denver was the first district to have its own school rating system or School Performance Framework. Other districts are now developing accountability systems through pilot programs like S-CAP and an additional pilot program developed during the 2019 legislative session.

Learning the lessons of Denver’s SPF and understanding how it differs from the state’s is critical as we move forward. Denver’s SPF is designed to give greater weight to growth over proficiency so that schools that are doing a better job at getting students to grade level can be more easily distinguished. The state also gives greater weight to growth, though not as much as DPS.

Neither the state’s nor Denver’s SPF is completely correlated with the demographics of the schools, but the state’s SPF ratings correlate more strongly with school demographics, in particular the poverty level of the student population. This makes it difficult to determine how much a school may be impacting learning regardless of what students bring to school. The DPS SPF is much less correlated with student demographics, arguably making it a better tool for determining a school’s impact on student learning.

The DPS SPF has far more measures than the state SPF, which makes it harder to understand. These include early literacy assessments for grades K-2, an achievement gap measure and student engagement metrics. Some of these measures are problematic because they incentivize growth in the measure itself, such as taking “college courses,” rather than true college readiness.

Also, the DPS SPF is not transparent. The public cannot currently see how the basic measures are translated into scores that roll up into the final rating or color-coding.

Given the discussion at both the state and Denver district levels, we believe the time is right for Denver Public Schools to once again set the standard for how to ensure we have accurate and transparent measures of school quality. DPS’s current SPF has been undermined by misleading measures, too many measures with too many changes over time and little transparency.

The recently formed Quality Schools Task Force, Superintendent Cordova and, ultimately, the DPS Board of Education must make sure the new SPF returns to being a trusted and valued indicator of whether students will learn to read. The cost to students and our entire community is far too great if they do not step up to deliver.