by Peter Huidekoper Jr., Coordinator, Colorado Education Policy Fellowship Program, St. John’s College, New Mexico

Remember the 3 R’s? Remember those days of yesteryear when our parents, grandparents, and other old fogeys thought it pretty important to be sure that, if nothing else, schools taught us reading, writing, and mathematics?

Yes, writing. Not the nebulous “language arts” or “literacy skills.” W-r-i-t-i-n-g.

Remember back in the day, 1997, when the state of Colorado, after establishing standards in eleven key disciplines in 1995[i], began to assess how our students did in all the 3 R’s? At first for grade 4, adding grade 7 (1999), then grade 10 (2001), and by 2002 for all grades, 3-10. Although many other states early in the standards and accountability era, especially after No Child Left Behind became law in 2002, limited their tests to reading and math, Colorado (3 cheers!) did not; “all grades were tested in Reading, Writing, and Math.”[ii] Annual reports revealed how students performed as readers, and as writers. Different skills. The results, too, were markedly different.

Since 2015, using the new PARCC assessments, we have continued to ask students to demonstrate their reading and writing skills in the English Language Arts test. But for reasons I fail to understand, the state no longer presents a breakdown on the different results for these two vital disciplines, not as it did from 1997-2014. We just see one English Language Arts (ELA) score. Why?

When the state told us

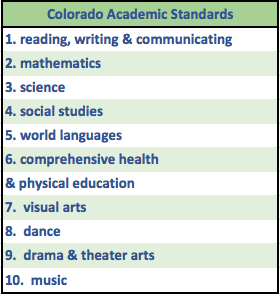

Mom, Dad: is your student proficient in reading but not in writing? Schools, teachers: is there a significant gap in the number of your students who perform at grade level in reading versus writing? Colorado taxpayers who pay for most of our state’s K-12 public education, while you might like to see how our students are doing in all ten of the Colorado Academic Standards (see sidebar): wouldn’t you especially like to know if students are meeting the standards on “the R’s”: reading, writing, and mathematics?

In 2002, the state told us. On all three disciplines. The much lower percentage of students proficient in writing versus reading was telling. I was teaching 7th and 8th grade English then and studied the results that summer. Clear evidence that I—that we as a faculty—needed to do a better job as writing teachers.

One teacher’s experience – the writing assessment on CSAP

Colorado was ahead of most states from 1997 to 2014 in giving our students tests that asked much more than merely “fill in the bubble.” CSAP required a substantial amount of writing. (This was one reason the scoring took so long and why the results weren’t available until August.) I taught in charter schools six of those years. I paid attention to the writing standards for the state (Addendum A) and our district. Each March I handed out the CSAP reading/writing assessments. Six sessions in all, an hour each. Two sessions were spent largely on a first and then a second draft of a longer written task. If not an ideal way to measure extended writing skills, still meaningful. When the scores came back, I found the information useful, especially when the 7th graders I taught the year before were now my 8th grade students. More work to do on organization and transitions, details and depth, etc.

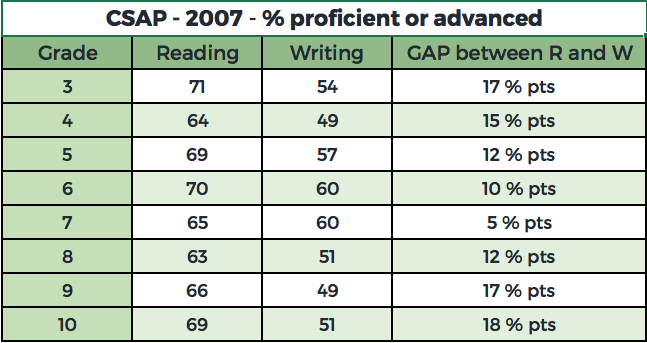

Ten years ago, the state told us. “State officials unveiled Colorado Student Assessment test scores Tuesday…” (The Denver Post – CSAP Roundup – by Jeremy P. Meyer, Aug. 1, 2007). I note here several grades where the gap was especially glaring.

In 2011, the final year Colorado used the CSAP test, the state told us:

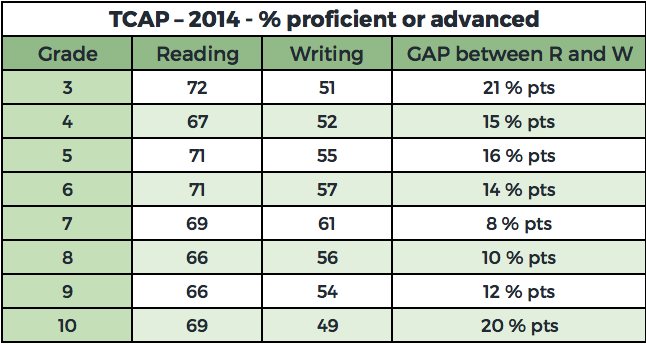

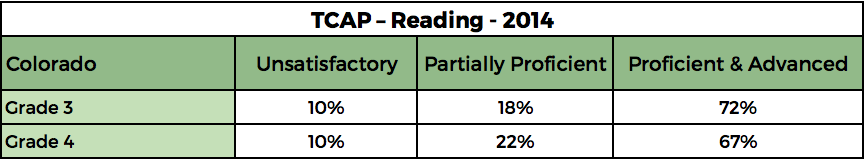

Over the following three years, using TCAP, the state continued to report the two scores. In 2014, the final year of TCAP, the state told us:

What the reading/writing gap can tell a school

Good schools can use the LOWER writing scores to make a point to the entire teaching staff: to help our students write well, it is a responsibility of all academic teachers to pay attention to and encourage strong writing. English teachers have complained forever (yes, guilty!) about being the only faculty member talking with our students about good sentences and coherent paragraphs, about spelling, word choice, clarity, about good writing. We benefit when the school’s leaders make this a priority across the board. Social studies and science teachers, especially, need to be writing teachers too. This data can lead schools to realize how much more we need to do to help our students write well.

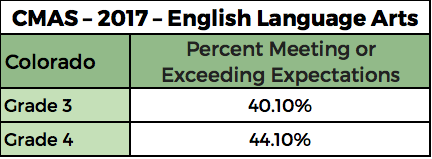

PARCC/CMAS – 2015-2017 – one ELA score

However, for the past three years, while the English Language Arts component of CMAS (Colorado Measures of Academic Success) tests reading and writing skills, in announcing and releasing the results the Colorado Department of Education only presents the ELA score. (CDE’s “highlights from the 2017 assessment results” notes the “Improvement on CMAS English language arts assessments.”[iii]) Do we see the gap between the two disciplines evident for the previous 15 years? No.

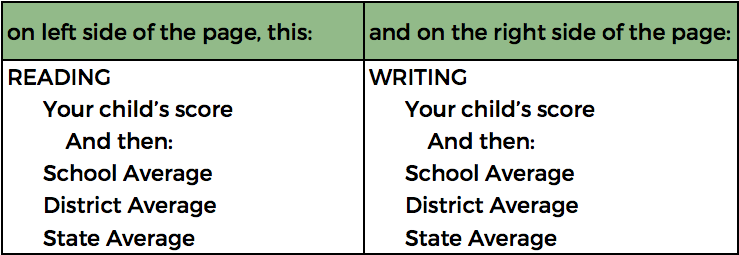

The irony is that a breakdown, of a kind, does come to every school, and every parent of a student in grades 3-8. When they receive the 2017 PARCC results, the state lets them know. Mom and Dad see a page that, on one side, shows results for the English Language Arts/Literacy Assessment: How did (child’s name) perform overall? Performance Level (1-5); and a graph showing the Percentage of students at each performance level (1-5). On the back, under the heading “How Did Your Child Perform in Reading and Writing?”, we see …

So there it is, the district and state average—one for reading, one for writing. True, not revealing proficiency—instead, what CDE terms “Average Scale Score”—and difficult for most parents to decipher. But public information. Even if not presented when CDE releases the PARCC/CMAS scores to the public.

I asked CDE why the public did not see distinct reading vs. writing results. Answers from staff shifted.

CSAP/TCAP had separate reading and writing tests with established performance levels, which is why the state posted percent proficient and advanced for them separately. CMAS has a single ELA test with established performance levels; so percent met and exceeded expectations is available for ELA only. (July 28)

Here is one paragraph from a longer email of a few days later (the full response is in Addendum C):

The state will not report out at the subclaim level. If the state were to report out on subclaims, then people would believe that they could compare across years, and that is not an appropriate use of the scores. For appropriate uses, please see page 31 of the CMAS and CoAlt Interpretive Guide: http://www.cde.state.co.us/assessment/2017cmascoaltinterpretiveguide (Aug. 1)

I studied that Interpretive Guide and quoted it (see 2.2 …) in my next reply; I also noted I had seen the very breakdown I refer to above on the pages schools and parents receive. So I had my doubts.

2.2 Performance Levels and Types of Scores on the Student Reports

Student performance on the Colorado assessments is described at varying levels on the individual student reports using scale scores, performance levels, subclaim performance indicators, and percentile ranking. State, district, and school average results are included in relevant sections of the report to help parents understand how their student’s performance compares to that of other students. (Bold mine.)

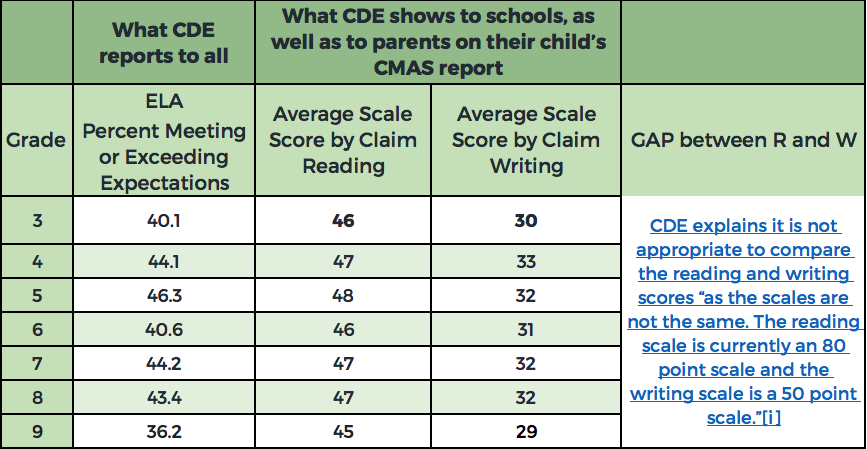

That’s when—on August 23—CDE did release the different scores to me, adding this:

As you point out, the subscale information is available to parents and educators. Although we do not post standard level information for any of the assessments for reasons we have already shared, the state-level information is publically [sic] available. Please see below for the 2017 state level average subscale scores for Reading and Writing.

I show them here, side by side with the ELA figures (which I have added) that the state features in all its presentations on CMAS results.

CMAS PARCC – Spring 2017 Achievement Results – COLORADO

Differences in reading vs. writing results

With some digging, then, CDE will tell you. But why not tell us this from the very start? Over the next three weeks I then exchanged emails with Joyce Zurkowski, Executive Director of Assessment at CDE. Her words both clarified, and muddled, the issue for me. Are reading and writing results “subscores” in language arts, as physical science and earth systems are for science? Did policymakers, in shifting to PARCC, really think an ELA test would not assess and give us results on these two distinct disciplines? And—try to stay with me now—can we say a 46 average scale score in reading, for third graders, on an 80 point scale, is better than a 30 average scale score in writing on a 50 point scale? Maybe not! Confused?
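One rough way to put those two third-grade averages side by side is to express each as a share of its own scale. The sketch below is only illustrative arithmetic. The function name `share_of_scale` and its `floor` parameter are hypothetical, and it assumes each scale starts at zero, which CDE's emails do not confirm. Average scale scores are also not percent-correct figures, so these shares say nothing about proficiency.

```python
def share_of_scale(score, scale_points, floor=0):
    """Return where a score sits on its scale, as a fraction.

    floor is the lowest possible score; zero is an assumption here,
    not something stated in the CDE correspondence.
    """
    return (score - floor) / scale_points


# Third-grade averages quoted above: 46 on an 80-point reading scale,
# 30 on a 50-point writing scale.
reading = share_of_scale(46, 80)
writing = share_of_scale(30, 50)

print(f"reading: {reading:.1%} of its scale")
print(f"writing: {writing:.1%} of its scale")
```

On these assumptions the reading average sits slightly lower on its scale than the writing average does on its own, even though 46 is the larger number. That is why the two raw figures cannot simply be compared.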

I leave it to others to articulate better questions and to make a stronger case than I have done. I am convinced the Colorado Department of Education should provide a breakdown of the information as to how well our students perform in 1) reading and 2) writing, much as it did from 1997 to 2014.

I believe it is a terrible step backwards not to have this data.

Another View is a newsletter by Peter Huidekoper Jr. Comments are welcome. 303-757-1225 – peterhdkpr@gmail.com

Addendum A – Colorado Department of Education – Writing Standards – 1995

WRITING STANDARD 2

Students write and speak for a variety of purposes and audiences.

In order to meet this standard, students will

- write and speak for a variety of purposes such as telling stories, presenting analytical responses to literature, conveying technical information, explaining concepts and procedures, and persuading;

- write and speak for audiences such as peers, teachers, and the community;

- plan, draft, revise, proofread, and edit written communications;

- use a variety of devices such as figurative language, symbolism, dialect, and precise vocabulary to convey meaning;

- organize written and oral presentations using strategies such as lists, outlining, cause/effect relationships, comparison/contrast, problem/solution, and narration; and

- use handwriting and at the most appropriate time, word processing to produce a product that is legible.

GRADES 5-8

As students in grades 5-8 extend their knowledge, what they know and are able to do includes

- writing stories, letters, and reports with greater detail and supporting material;

- choosing vocabulary and figures of speech that communicate clearly;

- drafting, revising, editing, and proofreading for a legible final copy;

- applying skills in analysis, synthesis, evaluation, and explanation to their writing and speaking;

- incorporating source materials into their speaking and writing (for example, interviews, news articles, encyclopedia information);

- writing and speaking in the content areas (for example, science, geography, history, literature), using the technical vocabulary of the subject accurately; and

- recognizing stylistic elements such as voice, tone, and style.

WRITING STANDARD 3

Students write and speak using conventional grammar, usage, sentence structure, punctuation, capitalization, and spelling.

In order to meet this standard, students will

- know and use correct grammar in speaking and writing;

- apply correct usage in speaking and writing;

- use correct sentence structure in writing; and

- demonstrate correct punctuation, capitalization, and spelling.

GRADES 5-8

As students in grades 5-8 extend their knowledge, what they know and are able to do includes

- identifying the parts of speech such as nouns, pronouns, verbs, adverbs, adjectives, conjunctions, prepositions, and interjections;

- using correct pronoun case, regular and irregular noun and verb forms, and subject-verb agreement involving comparisons in writing and speaking;

- using modifiers, homonyms, and homophones in writing and speaking;

- using simple, compound, complex, and compound/complex sentences in writing and speaking;

- punctuating and capitalizing titles and direct quotations, using possessives, and correct paragraphing in writing;

- using prefixes, root words, and suffixes correctly in writing and speaking;

- expanding spelling skills to include more complex words;

- demonstrating use of conventional spelling in their published works; and

- using resources such as spell checkers, dictionaries, and charts to monitor their spelling accuracy.

Addendum B – Good data for grades 3 and 4 on Reading, and the READ Act (HB-1238)

Many of us are extremely grateful for the additional state resources committed by HB 1238, the 2012 Colorado READ Act—over $35 million a year in 2014, 2015, and 2016[v]—to help K-3 boys and girls identified as “students with a significant reading deficiency.” Naturally, we are eager to see if this major commitment is producing more 3rd and 4th graders reading at grade level – not, admittedly, its central purpose, but surely an outcome to be wished for. While those implementing the READ Act use other assessments, at some point we would surely love to see the benefits in the state assessment of reading for all 3rd and 4th graders.

In 2014, the final year of TCAP results, we could look at this information.

Policymakers, parents, and everyone implementing the READ Act must be especially keen to see if HB-1238 is proving helpful in districts where (according to the 2014 TCAP) 40% or more of the third graders were not proficient readers. Such as in these five districts:

This is another example of where PARCC/CMAS ELA results from CDE do not tell us what we need to know. Everyone focused on the successful implementation of the READ Act gets nothing from this:

To see if more of our students are meeting expectations in READING at grade level, we need the results for … READING!

Addendum C – From the Colorado Department of Education

Email response to me from CDE staff on August 1:

The district reports are confidential because suppression rules have not been applied. A district may have less than 16 students in a grade/category reported on the district report. State and federal privacy laws limit information that can be shared to protect personally identifiable information (PII). We want districts to be able to see all of their information, but we cannot make that information public without suppressing much of it.

The writing assessments (via PARCC) measured both reading and writing standards within the Prose Constructed Response (PCR) items. There were always at least two reading standards being measured in each writing task in the test. So, the assessment gets a little more complex there (as compared to CSAP which didn’t have any reading standards assessed in the writing task). In fact, the rubric says “Reading Comprehension and Written Expression” which makes that component of the assessment a little less distinct. Students are demonstrating their comprehension of the reading THROUGH their writing about the text. That expectation in the assessment is aligned with the Standards; the writing standards ask students to be able to “develop the topic with relevant, well-chosen facts…concrete details, quotations”; the reading standards ask students to “delineate and evaluate the argument and specific claims in a text, assessing whether the reasoning is sound and the evidence is relevant….” So, students have to “read like writers” and analyze what the writers of a text are doing AND they have to write after doing close reading of texts.

The state will not report out at the subclaim level. If the state were to report out on subclaims, then people would believe that they could compare across years, and that is not an appropriate use of the scores. For appropriate uses, please see page 31 of the CMAS and CoAlt Interpretive Guide: http://www.cde.state.co.us/assessment/2017cmascoaltinterpretiveguide

[i] “Passed in 1993, House Bill 93-1313 initiated standards based education Colorado. The statute required the state to create standards in reading, writing, mathematics, science, history, civics, geography, economics, art, music and physical education. The statute also originated the Colorado student assessment program in 1996.” Colorado Department of Education – http://www.cde.state.co.us/standardsandinstruction/cas-historyanddevelopment. (Bold mine. See Addendum A for part of the 1995 writing standards.)

[ii] http://www.wow.com/wiki/Colorado_Student_Assessment_Program?s_chn=76&s_pt=source2&s_gl=US&v_t=content

[iii] http://www.cde.state.co.us/communications/20170817assessmentnewsrelease

[iv] email to me from CDE, 9/19/17.

[v] http://www.cde.state.co.us/coloradoliteracy/readresources